Transfer Learning has emerged as a transformative approach in the realm of machine learning and deep learning, enabling more efficient and adaptable model training. Here’s an in-depth exploration of this concept.

Concept

Transfer Learning is a technique where a model developed for a specific task is repurposed for a related but different task. This method is particularly efficient as it allows the model to utilize its previously acquired knowledge, significantly reducing the need for training from the ground up. This approach not only saves time but also leverages the rich learning obtained from previous tasks.

Reuse of Pre-trained Models

A major advantage of transfer learning is its ability to use pre-trained models. These models, trained on extensive datasets, contain a wealth of learned features and patterns, which can be effectively applied to new tasks. This reuse is especially beneficial in scenarios where training data is limited or when the new task is somewhat similar to the one the model was originally trained on.

Enhanced Learning Efficiency

Transfer learning greatly reduces the time and resources required for training new models. By leveraging existing models, it circumvents the need for extensive computation and large datasets, which is a boon in resource-constrained scenarios or when dealing with rare or expensive-to-label data.

-

Reduced Training Time: One of the primary benefits of transfer learning is the substantial reduction in training time. By using models pre-trained on large datasets, a significant portion of the learning process is already completed. This means that less time is needed to train the model on the new task.

-

Lower Resource Requirements: Transfer learning mitigates the need for powerful computational resources that are typically required for training complex models from scratch. This aspect is especially advantageous for individuals or organizations with limited access to high-end computing infrastructure.

-

Efficient Data Utilization: In scenarios where acquiring large amounts of labeled data is challenging or costly, transfer learning proves to be particularly beneficial. It allows for the effective use of smaller datasets, as the pre-trained model has already learned general features from a broader dataset.

-

Quick Adaptation to New Tasks: Transfer learning enables models to quickly adapt to new tasks with minimal additional training. This quick adaptation is crucial in dynamic fields where rapid deployment of models is required.

-

Overcoming Data Scarcity: For tasks where data is scarce or expensive to collect, transfer learning offers a solution by utilizing pre-trained models that have been trained on similar tasks with abundant data. This approach helps in overcoming the hurdle of data scarcity [[6†source]].

-

Improved Model Performance: Often, models trained with transfer learning exhibit improved performance on new tasks, especially when these tasks are closely related to the original task the model was trained on. This improved performance is due to the pre-trained model’s ability to leverage previously learned patterns and features.

Applications

The applications of transfer learning are vast and varied. It has been successfully implemented in areas such as image recognition, where models trained on generic images are fine-tuned for specific image classification tasks, and natural language processing, where models trained on one language or corpus are adapted for different linguistic applications. Its versatility makes it a valuable tool across numerous domains.

Adaptability

Transfer learning exhibits remarkable adaptability, being applicable to a wide array of tasks and compatible with various types of neural networks. Whether it’s Convolutional Neural Networks (CNNs) for visual data or Recurrent Neural Networks (RNNs) for sequential data, transfer learning can enhance the performance of these models across different domains.

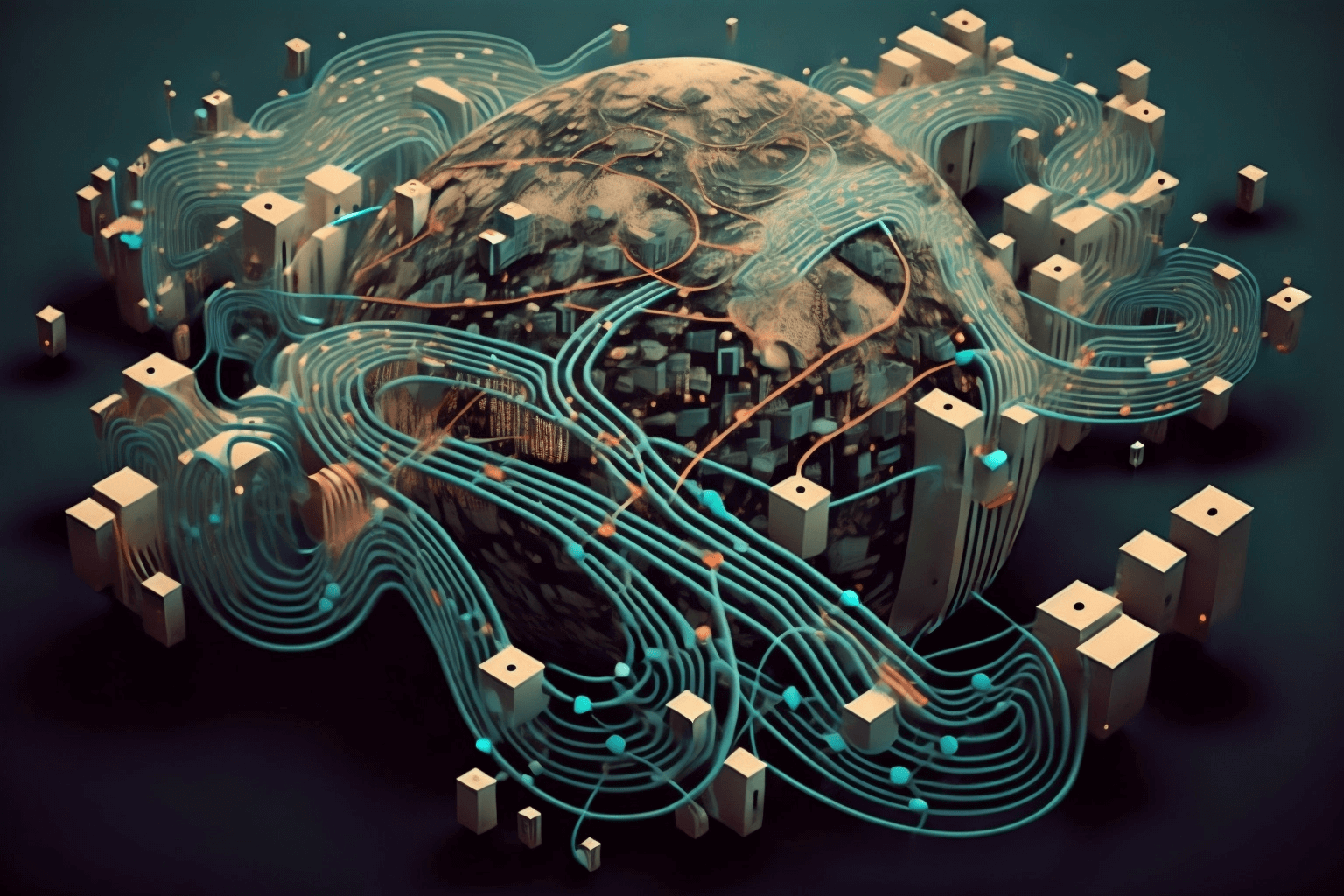

How Transfer Learning is Revolutionizing Generative Art

Transfer Learning is playing a pivotal role in the field of generative art, opening new avenues for creativity and innovation. Here’s how it’s being utilized:

Set up transfer learning in Python

To set up transfer learning in Python using Keras, you can leverage a pre-trained model like VGG16. Here’s a basic example to demonstrate this process:

from keras.applications.vgg16 import VGG16, preprocess_input, decode_predictions

from keras.preprocessing.image import img_to_array, load_img

from keras.models import Model

# Load the VGG16 model pre-trained on ImageNet data

vgg16_model = VGG16(weights='imagenet')

# Remove the last layer

vgg16_model.layers.pop()

# Freeze the layers except the last 4 layers

for layer in vgg16_model.layers:-4]:

layer.trainable = False

# Check the trainable status of the individual layers

for layer in vgg16_model.layers:

print(layer, layer.trainable)

from keras.layers import Dense, GlobalAveragePooling2D

from keras.models import Sequential

custom_model = Sequential()

custom_model.add(vgg16_model)

custom_model.add(GlobalAveragePooling2D())

custom_model.add(Dense(1024, activation='relu'))

custom_model.add(Dense(1, activation='sigmoid')) # For binary classification

custom_model.compile(loss='binary_crossentropy',

optimizer='rmsprop',

metrics='accuracy'])

# custom_model.fit(train_data, train_labels, epochs=10, batch_size=32)

img = load_img('path_to_your_image.jpg', target_size=(224, 224))

img = img_to_array(img)

img = img.reshape((1, img.shape0], img.shape1], img.shape2]))

img = preprocess_input(img)

# Predict the class

prediction = custom_model.predict(img)

print(prediction)

Remember, this is a simplified example. In a real-world scenario, you need to preprocess your dataset, handle overfitting, and possibly fine-tune the model further. Also, consider using train_test_split for evaluating model performance. For comprehensive guidance, you might find tutorials like those in Keras Documentation or PyImageSearch helpful.

Boosting Performance on Related Tasks

One of the most significant impacts of transfer learning is its ability to boost model performance on related tasks. By transferring knowledge from one domain to another, it aids in better generalization and accuracy, often leading to enhanced model performance on the new task. This is particularly evident in cases where the new task is a variant or an extension of the original task.

Transfer learning stands as a cornerstone technique in the field of artificial intelligence, revolutionizing how models are trained and applied. Its efficiency, adaptability, and wide-ranging applications make it a key strategy in overcoming some of the most pressing challenges in machine learning and deep learning.